Today’s software testing trends show the growing demand for more efficient and automated API testing. Manual testing is not only time-intensive for internal testing teams, it can also lead to poor customer experiences. When manual testing processes cannot proactively discover issues, your customers may inevitably be the ones finding them.

Many current test automation solutions focus on the UI, while most API-level testing is still done manually. As more companies focus on creating highly efficient and agile environments, testers need easy-to-use, intelligent, and automated API testing tools.

With this in mind, we’ll dive into the key considerations for successful API testing as well as various testing methodologies. Then, we’ll take a look at some of the best API testing tools available on the market today.

What is API Testing?

API testing involves a provider employing various tests to confirm their Application Programming Interfaces (APIs) function correctly, perform efficiently, are reliable, and maintain stringent security standards.

API tests often focus on a wide range of specific domains, including tests that validate API responses, tests that measure performance under load, and tests that check how efficiently APIs communicate with each other.

These tests are then often ingested into a comprehensive reporting infrastructure, allowing developers to use these findings to guide new development, mitigate systemic issues, and derive data for additional testing and management across the entire API lifecycle.

It’s crucial to understand that API testing significantly diverges from other forms of testing, such as UI testing. The primary focus of API testing is on the functional aspects of the API itself. This means that API testing is more concentrated on ensuring the proper functioning of APIs rather than the aesthetic rendering of a UI across different environments. It’s best to think of API testing as an infrastructural consideration, as these tests focus more on operational factors.


What Are the Types of API Testing?

There are different types of API testing, each of which has its own benefits and drawbacks. Often, multiple types are combined to ensure comprehensive coverage of the entire API.

Functional Testing

Functional testing is the core approach to validating API functionality. These tests can include unit testing and integration testing, where you focus on the capabilities of the executed code rather than its performance.
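As a rough illustration, a functional check can be as small as calling one endpoint and asserting on the status code and body. The sketch below assumes a hypothetical `/users/1` endpoint and uses a local stub server (`StubHandler`) as a stand-in for the API under test; neither comes from a real service.

```python
import http.client
import json
import threading
from http.server import BaseHTTPRequestHandler, HTTPServer


class StubHandler(BaseHTTPRequestHandler):
    """Hypothetical stand-in for the API under test."""

    def do_GET(self):
        if self.path == "/users/1":
            body = json.dumps({"id": 1, "name": "Ada"}).encode()
            self.send_response(200)
            self.send_header("Content-Type", "application/json")
            self.send_header("Content-Length", str(len(body)))
            self.end_headers()
            self.wfile.write(body)
        else:
            self.send_response(404)
            self.send_header("Content-Length", "0")
            self.end_headers()

    def log_message(self, *args):
        pass  # keep test output quiet


def fetch_user(host, port, user_id):
    """Call the endpoint and return (status, parsed JSON body)."""
    conn = http.client.HTTPConnection(host, port, timeout=5)
    try:
        conn.request("GET", f"/users/{user_id}")
        resp = conn.getresponse()
        return resp.status, json.loads(resp.read())
    finally:
        conn.close()


def run_functional_check():
    """Start the stub, run one functional assertion, then shut down."""
    server = HTTPServer(("127.0.0.1", 0), StubHandler)  # port 0 = any free port
    threading.Thread(target=server.serve_forever, daemon=True).start()
    try:
        host, port = server.server_address[0], server.server_address[1]
        status, body = fetch_user(host, port, 1)
        # The functional contract: correct status and correct payload.
        assert status == 200
        assert body == {"id": 1, "name": "Ada"}
        return status, body
    finally:
        server.shutdown()
        server.server_close()
```

Calling `run_functional_check()` exercises the contract once. Note that nothing here measures how fast the response arrived, which is the gap described next.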

Functional testing will often be the first type of testing you implement, as these tests are the quickest and most efficient ways of testing APIs. The drawback, however, is that these sorts of tests only give you a sense of how each unit is performing in isolation, meaning that you can’t really get a sense of the actual performance of code changes. This big gap in understanding is where non-functional testing comes into play.

Non-functional Testing

Non-functional testing is the next step after verifying that all features work as expected. From here, you can start looking into the performance and stability of those features. Non-functional tests can verify the capabilities and reactions of your application under various conditions, allowing for a granularity in testing scenarios that reflects changes and variables that you can’t really test in regular functional testing.

For instance, API load testing can help to verify your application’s ability to handle a given load. Stress testing can verify whether it can handle traffic spikes. Performance testing as a whole provides important and useful insights into your application’s performance — like measuring response times, throughput, and resource usage — before pushing any changes to production.

These test scenarios can be scaled to different conditions, and they are very effective at testing hypotheticals to ensure that your API is robust, scalable, and extensible.
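To make the idea of load testing concrete, here is a minimal sketch of a load generator. It assumes `send` is any callable that performs one request and raises on failure; `fake_request` is a hypothetical placeholder that only sleeps, so the numbers it produces say nothing about a real API.

```python
import time
from concurrent.futures import ThreadPoolExecutor


def timed_call(send):
    """Run one request; return (latency_seconds, succeeded)."""
    start = time.perf_counter()
    try:
        send()
        ok = True
    except Exception:
        ok = False
    return time.perf_counter() - start, ok


def run_load(send, total_requests, concurrency):
    """Fire `total_requests` calls using `concurrency` worker threads."""
    started = time.perf_counter()
    with ThreadPoolExecutor(max_workers=concurrency) as pool:
        results = list(pool.map(lambda _: timed_call(send), range(total_requests)))
    elapsed = time.perf_counter() - started
    latencies = [latency for latency, _ in results]
    failures = sum(1 for _, ok in results if not ok)
    return {
        "requests": total_requests,
        "failures": failures,
        "throughput_rps": total_requests / elapsed,
        "max_latency_s": max(latencies),
    }


def fake_request():
    """Hypothetical request: replace with a real HTTP call to your API."""
    time.sleep(0.001)


report = run_load(fake_request, total_requests=50, concurrency=5)
```

Raising `concurrency` and `total_requests` turns the same function into a crude stress test. Dedicated tools add ramp-up patterns, realistic payloads, and distributed load generation on top of this basic loop.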


Security Testing

Functionality and performance are arguably the most important aspects of an application to test from an operational point of view, but thorough testing requires other focuses to be validated and bolstered. Even the best functioning API is worthless if it has zero security, as this can undermine trust, expose data, and threaten the system as a whole.

Security testing, therefore, is a huge focus for many providers. Security testing can take multiple forms. Penetration testing, also known as pen testing, is a form of testing that simulates attacks on a system to test its security. Vulnerability scanning can help detect issues within the functional deployment itself, pointing towards potential misconfigurations, authentication and authorization issues, and so forth.
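One of the simplest authentication checks can be sketched in a few lines: probe an endpoint with missing and invalid credentials and confirm it refuses both. The sketch assumes `send(headers)` is a callable that returns an HTTP status code; `leaky_endpoint` and `strict_endpoint` are hypothetical simulations, not real services, and this is in no way a substitute for penetration testing or a vulnerability scanner.

```python
def audit_auth(send):
    """Probe an endpoint with missing and invalid credentials.

    `send(headers)` is a hypothetical callable returning an HTTP status code.
    Returns a list of findings; an empty list means both probes were rejected.
    """
    probes = {
        "no credentials": {},
        "invalid token": {"Authorization": "Bearer not-a-real-token"},
    }
    findings = []
    for label, headers in probes.items():
        status = send(headers)
        if status not in (401, 403):
            findings.append(f"{label}: expected 401 or 403, got {status}")
    return findings


def leaky_endpoint(headers):
    # Simulated bug: any Authorization header is accepted without validation.
    return 200 if "Authorization" in headers else 401


def strict_endpoint(headers):
    # Simulated correct behavior: only one known token is accepted.
    return 200 if headers.get("Authorization") == "Bearer valid-token" else 401


leaky_findings = audit_auth(leaky_endpoint)
strict_findings = audit_auth(strict_endpoint)
```

Here `leaky_findings` reports the invalid-token probe, while `strict_findings` is empty.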

What is important is to ensure that security testing in particular is comprehensive. Comprehensive security testing, especially testing that is layered, will result in much more secure APIs than a solution that is singularly focused. Your tooling should also support a wide range of programming languages and automated testing to ensure that this testing can be replicated and extended moving forward, especially if you intend on using automated testing to any useful degree.

Today, automated tools such as Snyk can cover part of this work. Snyk helps detect vulnerabilities by analyzing your code and its dependencies, and it can remediate them by automatically creating pull requests.

Regression Testing

While it’s important to test new features, it’s perhaps even more crucial to verify that existing features haven’t broken or slowed down as a result of new changes, which is known as a regression.

Implementing regression testing in your pipeline involves re-running previously executed tests to ensure that new changes aren’t negatively impacting current features. It can also involve recording performance metrics such as transactions-per-second (TPS) in production and comparing them to the metrics collected during a load test.
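The metric comparison described above could be sketched as follows. The metric names, the 10% tolerance, and the sample numbers are all assumptions chosen for illustration; pick values that match your own service-level objectives.

```python
# Metrics where a larger number is better; everything else is treated
# as "lower is better" (for example, latency). Hypothetical naming.
HIGHER_IS_BETTER = {"tps"}


def find_regressions(baseline, current, tolerance=0.10):
    """Return metrics that got worse by more than `tolerance` (a fraction)."""
    regressions = {}
    for name, base in baseline.items():
        now = current[name]
        if name in HIGHER_IS_BETTER:
            change = (base - now) / base  # how far the metric dropped
        else:
            change = (now - base) / base  # how far the metric rose
        if change > tolerance:
            regressions[name] = round(change, 3)
    return regressions


# Hypothetical numbers: production baseline vs. the latest load test.
baseline = {"tps": 200.0, "p95_latency_ms": 120.0}
current = {"tps": 170.0, "p95_latency_ms": 125.0}
regressions = find_regressions(baseline, current)
```

With these numbers, throughput fell by 15% and is flagged, while the roughly 4% latency increase stays within tolerance.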

Whichever way you choose to implement it, regression testing is an important aspect of maintaining high quality and reliability as your API evolves.


Key Factors to Consider in API Testing Tools

When evaluating API testing tools, you should consider a few key factors to ensure they meet your use case and specific requirements.

Usability and Learning Curve

The tool that you choose should have an intuitive interface, especially if your team has varying technical skills. It should offer comprehensive and clear documentation in addition to tutorials and other supportive materials to help get the system deployed in your organization.

Supported Protocols and Formats

Your tool should support the protocols that you use as well as the paradigms you’ve deployed. Using a SOAP specific tool might not be helpful in a RESTful deployment, for example. You should also ensure you have ample compatibility with data formats like JSON, XML, or others that your APIs rely on.

Testing Capabilities

When considering your tooling of choice, you want to make sure that it can support multiple testing approaches, including:

- Functional Testing – the ability to test basic functionality and expected outcomes.

- Performance Testing – the tools should allow you to test API performance under various loads.

- Security Testing – look for tools that identify vulnerabilities such as authentication flaws, injection attacks, or data leaks.

- Automation – support for scripting and automated test case execution.

- Data-Driven Testing – ability to test with multiple data sets for thorough validation.

Integration with CI/CD Pipelines

Ensure your choice of tool integrates seamlessly with continuous integration/continuous deployment (CI/CD) tools like Jenkins, GitLab CI/CD, or GitHub Actions. API testing should fit naturally into your DevOps workflow. You should also ensure that the tool is compatible with your operating systems, programming languages, and frameworks.

Scalability

The tool should handle increasing test cases or complex scenarios as your application grows, so you want to look for something that has ample support for horizontal and vertical scaling.

Making a Good Testing Regimen

Creating a good user experience is at the core of making a good product, and an effective testing process can play a key role in ensuring that no user experiences issues with your product. With the right API testing tools and processes, you can verify reliability, performance, and security as part of the software development process rather than waiting for user reports.

In this section, we cover the essential elements that will ensure a successful testing practice.

Determine your testing goals

Identify what you aim to test. This determination is foundational to shaping your testing strategy. If you’re just starting out, unit tests and integration tests are usually the first types of tests you’ll execute, as they can ensure the basic functionality of your application.

Then, you’ll likely move onto more advanced testing, like performance testing, which includes API load testing.

Automate API testing

Automating parts of the testing process allows your testing teams to bypass tedious manual tests, execute with consistency, catch bugs early, increase your test coverage, and ensure repeatability. In the software development world—especially with cloud applications and microservice environments—it’s easy to assume that test automation inherently means integration into CI/CD pipelines. However, test automation can be as simple as writing a test script to ensure consistency between test runs.

Many companies are performing automated tests already, such as creating a test script to execute a series of sequential commands that would otherwise have to be run manually, or automating most of the test creation and execution.

Use real-world data

Real-world data ensures that your tests provide as much end-user insight as possible and reflect true production conditions. This is especially crucial once you start integrating tests more deeply into your infrastructure.

Considering how varied user behavior and data is becoming in the modern world, using real-world data is important. For many companies, it’s no longer enough to generate a few rows in a database for testing—it simply won’t simulate real-world conditions accurately enough when accounting for the complexity, scale, and variability of data experienced in modern production environments.


Consider continuous implementations

Adopting automated API testing is a crucial part of improving developer efficiency, as it subsequently delivers high-quality software and reduces time-to-market. Implementing this in a continuous manner, however, takes these benefits one step further.

The automation of testing makes it much easier for developers to run more reliable tests, but you’re still relying on those tests being executed manually. The true power of automated API testing comes from being able to create gated checks as part of your pipeline, ensuring that all code meets certain criteria before it can be deployed to production.

This is why you should consider continuous implementation of your tests, to ensure that even the more advanced testing methodologies, such as performance testing, are being performed as part of new pull requests in some capacity.
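A gated check usually boils down to a script that turns test results into an exit code the pipeline can act on. The sketch below assumes the test run has already produced a dictionary of metrics; the metric names and limits are hypothetical.

```python
def gate_failures(metrics, limits):
    """Return human-readable reasons the build should be blocked."""
    failures = []
    for name, limit in limits.items():
        value = metrics.get(name)
        if value is None:
            # A missing metric blocks the build rather than passing silently.
            failures.append(f"{name}: metric missing")
        elif value > limit:
            failures.append(f"{name}: {value} exceeds limit {limit}")
    return failures


def gate_exit_code(metrics, limits):
    """Print any failures and return 0 (pass) or 1 (block the deploy)."""
    failures = gate_failures(metrics, limits)
    for failure in failures:
        print("GATE FAILED:", failure)
    return 1 if failures else 0


# Hypothetical limits and results; in a pipeline these come from the test run.
limits = {"error_rate": 0.01, "p95_latency_ms": 500}
passing = gate_exit_code({"error_rate": 0.002, "p95_latency_ms": 430}, limits)
blocked = gate_exit_code({"error_rate": 0.002, "p95_latency_ms": 650}, limits)
```

In a CI job, the script would end with `sys.exit(gate_exit_code(metrics, limits))`, since most CI systems, including Jenkins, GitLab CI/CD, and GitHub Actions, treat a non-zero exit code as a failed step.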

Configure a testing environment

Setting up a dedicated test environment is absolutely critical, especially once you start incorporating continuous testing. Giving each test its own environment lets you isolate and evaluate your application under controlled conditions, without the possibility of interference from ongoing development or other developers’ testing.

A well-configured test environment should simulate production as closely as possible, which includes software, hardware, network configuration, and, perhaps most importantly, data. It’s already been mentioned in a previous section, but the importance of realistic data in testing simply cannot be overstated.

Collect and analyze data

So far, any mention of data has referred to the underlying data used by the application during testing. However, the tests themselves are, of course, going to generate a variety of data as well—data determining the success of your tests.

During test executions, you should gather information about typical metrics such as CPU usage and RAM usage, infrastructure-specific metrics such as number of Pods when testing autoscaling in Kubernetes, errors and anomalies that happen during testing, performance metrics such as transactions-per-second (TPS), etc.

The point is, simply running a test and verifying that the application can run is rarely enough. In tightly-coupled microservices where latency can build up, collecting and analyzing performance metrics is crucial. If your application can successfully handle all requests you generate, but all with a response time of 2 seconds, is that still a success for you?
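To make that question concrete, the sketch below summarizes a run in which every request succeeds but each takes about two seconds. The sample data and the 0.5 second target are assumptions for illustration, and the percentile uses the simple nearest-rank method rather than any particular tool’s definition.

```python
import math


def percentile(samples, pct):
    """Nearest-rank percentile of a non-empty list (0 < pct <= 100)."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct * len(ordered) / 100))
    return ordered[rank - 1]


def summarize(results):
    """`results` is a list of (status_code, latency_seconds) pairs."""
    latencies = [latency for _, latency in results]
    errors = sum(1 for status, _ in results if status >= 500)
    return {
        "error_rate": errors / len(results),
        "p50_s": percentile(latencies, 50),
        "p95_s": percentile(latencies, 95),
    }


# Hypothetical run: every request returns 200, yet each takes about 2 seconds.
results = [(200, 2.0 + i * 0.01) for i in range(20)]
summary = summarize(results)

# Hypothetical objective: no errors and a p95 latency of at most 0.5 seconds.
meets_slo = summary["error_rate"] == 0 and summary["p95_s"] <= 0.5
```

The error rate is zero, but `meets_slo` is false: judged on status codes alone the run looks like a success, while the latency summary shows it is not.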

Implement automatic mocks

Most of the principles mentioned above can only be truly efficient and realistic by implementing automated mocks.

How can you create a separate environment for each test, seed realistic data, and execute it on every pull request? There are many ways of doing this using common tooling:

- Use Docker containers to create reproducible dependencies, and seed data by either importing it from external files, or generating it with scripts during the build process

- Deploy new Kubernetes clusters with lightweight tools such as K3s

- Create new git branches for each test, including the data in your new branch

- Use tools like Terraform or Azure ARM templates to dynamically spin up new infrastructure and tear it down

The above are just a few examples of the many ways to create test environments. But they all lack one key aspect: efficiency. For every option that has to seed data, how do you keep that data up to date? For every option that deploys a new instance of dependencies, how do you keep those up to date? It’s possible, but it requires a lot of engineering hours.

The optimal way of creating these isolated environments—also known as preview environments—is to use mocks that are automatically created. Mock servers have the benefit of being almost entirely static, with no other logic than a matching algorithm that determines the most appropriate response to a request. This allows mock servers to be run using very few resources, while also being much less complex than creating development instances of actual applications.
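The matching step can be sketched in a few lines. The scoring rule below (exact method and path, plus one point per matching query parameter) is an assumption made up for this example and is not the algorithm of any specific tool; the `MOCKS` entries are hypothetical as well.

```python
def match_score(recorded, incoming):
    """Score how well a recorded request matches an incoming one.

    Method and path must match exactly; each query parameter with an
    equal value adds one point. Returns -1 when there is no match.
    """
    if recorded["method"] != incoming["method"] or recorded["path"] != incoming["path"]:
        return -1
    score = 0
    for key, value in recorded.get("query", {}).items():
        if incoming.get("query", {}).get(key) == value:
            score += 1
    return score


def pick_response(mocks, incoming):
    """Return the response of the best-scoring mock, or a 404 if none match."""
    best, best_score = None, -1
    for mock in mocks:
        score = match_score(mock["request"], incoming)
        if score > best_score:
            best, best_score = mock, score
    if best is None:
        return {"status": 404, "body": "no matching mock"}
    return best["response"]


# Hypothetical static mock definitions.
MOCKS = [
    {"request": {"method": "GET", "path": "/orders", "query": {}},
     "response": {"status": 200, "body": "[]"}},
    {"request": {"method": "GET", "path": "/orders", "query": {"status": "open"}},
     "response": {"status": 200, "body": '[{"id": 7}]'}},
]

open_orders = pick_response(
    MOCKS, {"method": "GET", "path": "/orders", "query": {"status": "open"}}
)
```

Because the server holds only static data and a scoring function, it needs very little memory or CPU compared with a real instance of the dependency.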

But it’s important that these mock servers are configured and created automatically, as there’s still the concern of seeding data. One approach is to continuously record traffic from your production environment (both incoming and outgoing), then use the recorded outgoing traffic (and the responses) to generate your mock servers. This approach, combined with the use of recorded incoming traffic, is known as production traffic replication.
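As a simplified sketch of that idea, the code below splits recorded traffic into mock definitions (from outgoing calls) and replayable test inputs (from incoming calls). The JSON-lines record format and its field names are hypothetical, invented for this example; they do not represent the storage format of Speedscale or any other tool.

```python
import json

# Hypothetical recording: one JSON object per captured request/response pair.
RECORDED = [
    '{"direction": "incoming", "method": "GET", "path": "/checkout", "status": 200, "body": "ok"}',
    '{"direction": "outgoing", "method": "GET", "path": "/inventory/42", "status": 200, "body": "in stock"}',
    '{"direction": "outgoing", "method": "POST", "path": "/payments", "status": 201, "body": "created"}',
]


def build_mocks(recorded_lines):
    """Turn recorded outgoing calls into a lookup table of mock responses."""
    mocks = {}
    for line in recorded_lines:
        entry = json.loads(line)
        if entry["direction"] != "outgoing":
            continue  # incoming traffic becomes test input, not a mock
        key = (entry["method"], entry["path"])
        mocks[key] = {"status": entry["status"], "body": entry["body"]}
    return mocks


def replay_inputs(recorded_lines):
    """List the incoming requests to replay against the service under test."""
    entries = [json.loads(line) for line in recorded_lines]
    return [(e["method"], e["path"]) for e in entries if e["direction"] == "incoming"]


mocks = build_mocks(RECORDED)
inputs = replay_inputs(RECORDED)
```

Because both the mocks and the test inputs are derived from the same recording, re-recording keeps them current without anyone maintaining seed data by hand.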

Top 7 Best API Testing Tools of 2024

Below, we provide a curated list of some of the most well-regarded API testing tools, highlighting their advantages and the scenarios where they are most effectively used for API testing.

Speedscale

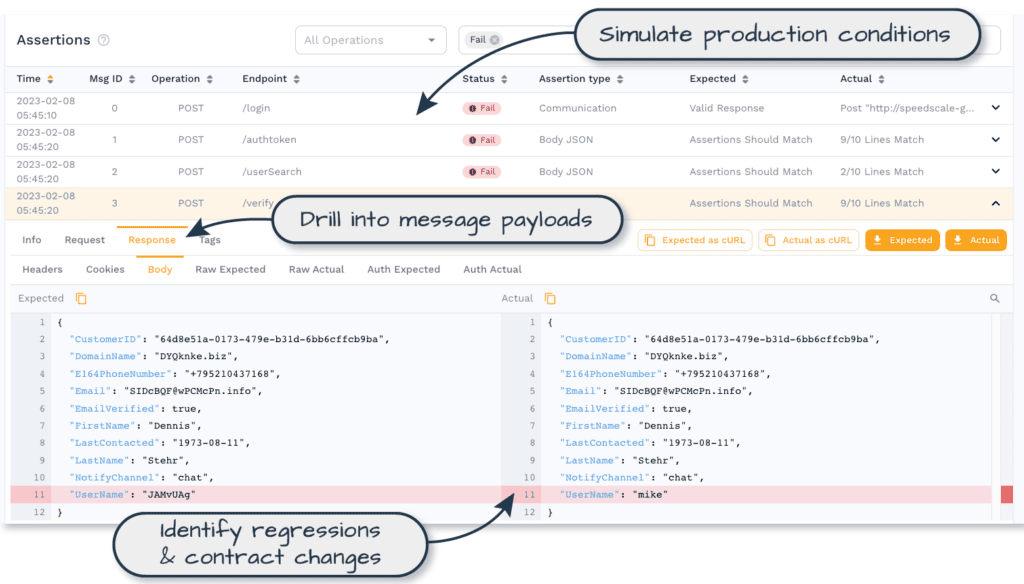

Speedscale is one of the most recent additions to the automated API testing tools market, and also one of the most revolutionary, born out of a simple idea: making a tool that can ensure API updates won’t break services or apps.

With so many connection points between different applications, ensuring quality without a lot of manual work is almost impossible. Developers should be able to accelerate their release schedules by performing test automation with real traffic.

Speedscale was developed with API testing best practices in mind. Speedscale’s key capability uses realistic data in a powerful and efficient way, allowing teams to see and fix issues before release.

The platform builds QA automation without any scripting required, and runs traffic-based API tests for performance, integration, chaos testing, and more. While other tools require scripting and observation, Speedscale allows developers to preview their app’s functionality under production load in mere seconds. This allows you to perform rapid tweaking and run isolated performance tests. Additionally, Speedscale gives developers realistic insight into application behavior without relying on users. The software builds mocks automatically from API traffic and shows how the app performs in real-world conditions by simulating non-responsiveness, random latencies, and errors.

Speedscale focuses solely on Kubernetes and uses Kubernetes-specific features. For example, setting up the tool requires installing an Operator into your cluster. After that, you instrument your applications with the Speedscale sidecar proxy. This proxy records incoming and outgoing requests (taking great care to prevent PII from being stored) and then stores them in Speedscale’s single-tenant, SOC2 Type 2 certified architecture, rather than requiring you to manage the data yourself. This approach allows you to record traffic in one environment and replay it in another (for example, record in production and replay in development) without configuring direct access between the clusters.

After storing the traffic, users can explore and filter tests and mocks that are generated based on this traffic. Because all the data is stored and managed by Speedscale, and all actions (such as traffic replay and automatic mock creation) are handled by the Operator installed in your cluster, integrating performance testing in your CI/CD pipelines is a simple task. One major benefit of Speedscale is how it integrates with other tools, even ones on this list. You can record traffic with Speedscale, then export it to a Postman collection. You can also quickly create performance and regression tests from a Postman collection.

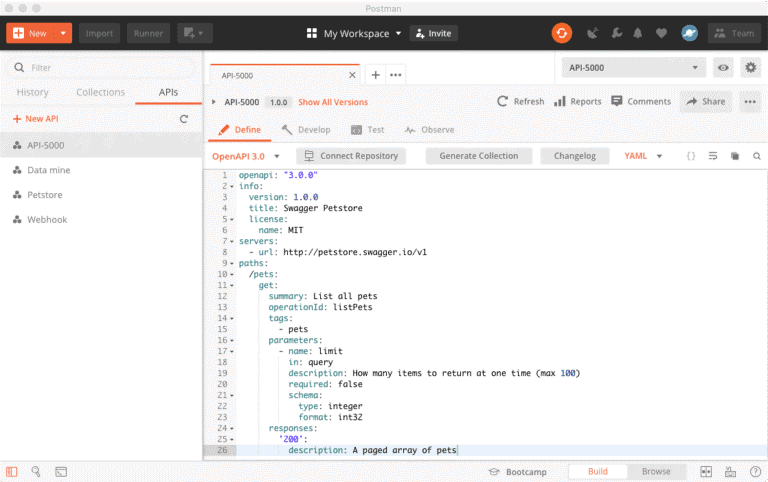

Postman

Postman is among the most popular API testing tools, and for good reason. Its collaboration platform has gathered more than 20 million developers at 500,000 companies around the world, allowing them to streamline collaboration and simplify every step of API building.

One of Postman’s main advantages is its ease of use. It’s quick and painless to set up, while being reasonably priced at just $12 for the basic package.

Postman allows you to quickly share APIs and easily test method calls. In fact, users can save collections and share them with their team. It’s seen by many web developers as an essential tool, given its key features.

However, some users have found the reporting capabilities to be somewhat lacking, making Postman more useful for quickly firing off local requests or keeping an organized collection of the requests associated with your application. Once you move into automated API testing—and especially continuous testing—using Postman may be labor-intensive.

Postman is better suited for functional testing, while tools like Apache JMeter may be a better fit for performance testing.

While the sharing capabilities within a single team are great, there’s a lot left to be desired when it comes to sharing with non-workspace users. The complex nature of the platform results in some of the more advanced features practically being “hidden” unless you get far enough on the learning curve.

All things considered, Postman isn’t a bad choice for an API testing tool. While the getting started guide provided by the platform isn’t as in-depth as you might like, there’s plenty of information online that can help you master the tool.


Postman is also an excellent way to quickly set up mock APIs, allowing developers to design and test on the fly using the platform’s mock servers. Within Postman, you can make requests without a production API ready to go, as it’s easy to create mock servers based on API schemas. However, there’s no native way of seeding data based on production traffic.

Above all else, its teamwork features are definitely the platform’s main draw, as well as the ability to quickly create and execute API requests. If you need an easy way to share API requests or an easy alternative to running curl commands, Postman is likely to be a good choice. But in terms of API test automation, you may find another tool more suitable.

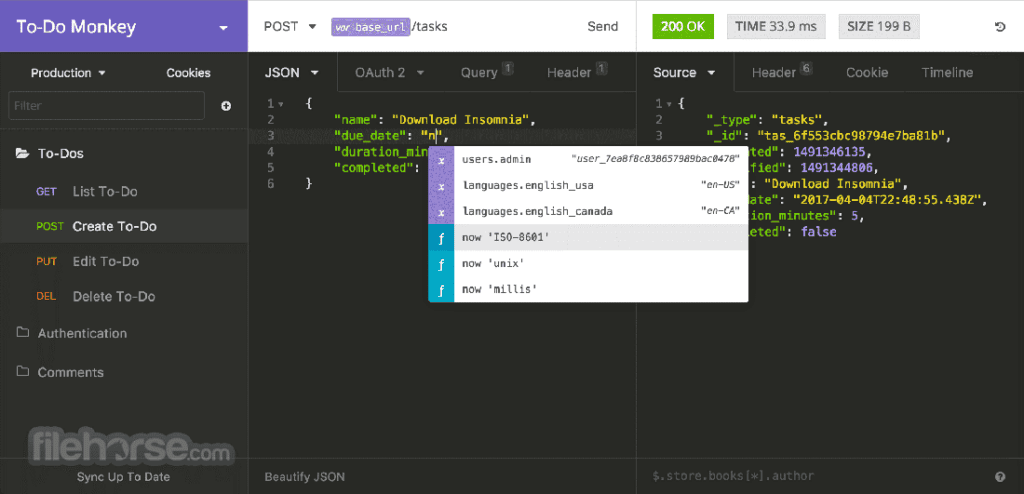

Insomnia

Insomnia is a modern, user-friendly API testing tool mainly positioned as a competitor to Postman, meaning that it offers many of the same benefits as Postman, as well as many of the same limitations.

Insomnia’s biggest difference is how it approaches the user interface and user experience, focusing on providing a more streamlined and efficient experience. This proves to be very useful in a variety of ways, as users of Postman often find it too bloated, whereas Insomnia is more minimalist and often easier to start with.

This does create some limitations for Insomnia, though. For example, both tools allow for request chaining; however, Insomnia requires you to run one request at a time, while Postman allows you to run an entire collection of requests at once.

In the context of API testing essentials, Insomnia is great at providing a simple and minimalist user interface, which most developers will find makes for a more efficient workflow. However, it has the same limitations as Postman, in that its support for automated and continuous testing is limited. Overall, Insomnia is a great tool depending on your use case.


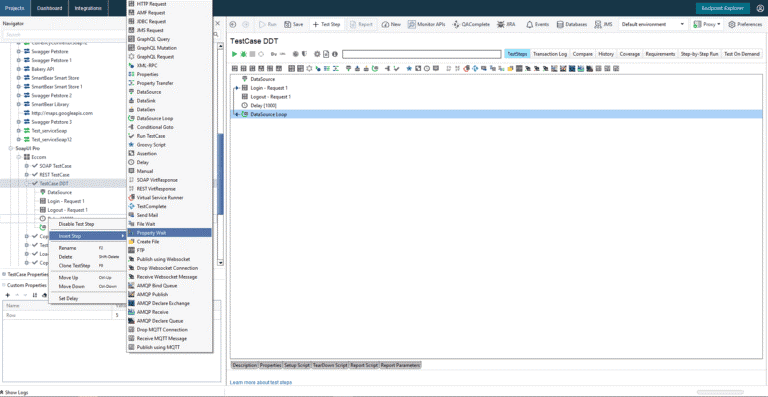

SoapUI

SoapUI’s functionalities are useful for all web service developers, with excellent support from its own development team, who are quick to jump on any user-reported bugs.

There’s a free, open source version of SoapUI and a premium paid version. That being said, even the open source version can be useful for creating efficient web service mocks without a single line of code—all you need to do is point to the WSDL file. Still, this will be of limited use without the premium version, because most of today’s applications are supported by RESTful web services and not SOAP, meaning they aren’t defined with a WSDL.

Apart from that, it is quite powerful when it comes to complex test suites and simple mocks. Plus, you can use Groovy scripts to write Java-like code and manipulate responses and requests for your web services. You can even directly access a database and confirm whether its content has been modified by a web service, which is incredibly valuable for the testing and development of web services.

However, it’s not without its downsides. The free version still has plenty of advanced features, but the documentation for them is incredibly poor. You’ll basically need to go through various forum posts to learn to use the more powerful options provided by the Groovy scripting functionality, and you’ll likely need to resort to trial and error and piece a lot of it together yourself.

Also, there seem to be some issues with the memory usage of SoapUI—it can grow to the point of crashing the program. You can alleviate this somewhat by allocating more memory to the program on startup and tinkering with logging levels, but it’s still a significant stability issue.

At the end of the day, SoapUI is a good choice if you want a simple mock service, or a complex one that you’re ready to put time and energy into, since the advanced features aren’t easy to use. If you do learn its ins and outs, SoapUI offers a lot of power and functionality as an API testing tool.

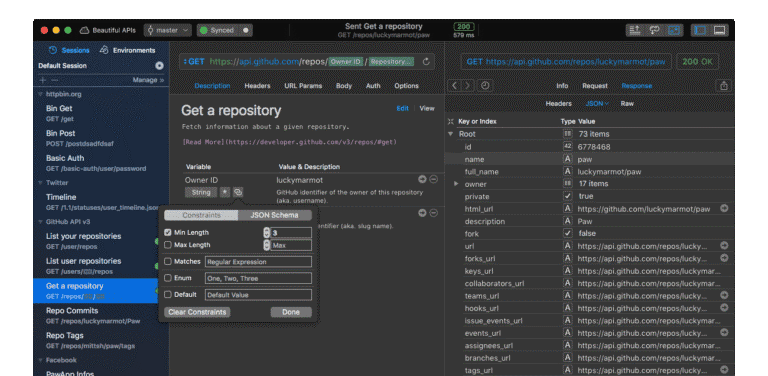

Paw

Paw works extremely well with GraphQL, and the free tier offers more advanced options than most of its competitors, though it is only compatible with Mac devices. It’s a full-fledged HTTP client for testing your APIs, with an extremely efficient interface that makes exporting API definitions, generating client code, inspecting server responses, and composing requests quite easy.

If you’re a macOS user, you’ll find that the likes of Postman or Insomnia don’t come close to Paw—especially in terms of GUI design and ease of use. One of the biggest issues with most other API testing tools is their cluttered UI (or, in the case of REST Assured, a lack thereof). However, Paw manages to sidestep this problem by providing an aesthetically-pleasing and intuitive UI. Its variable system was made with great care, as was the JS-based plugin system that’s incredibly easy to use.

Paw allows you to quickly organize requests, sort by different parameters, make groups, etc. It has great support for S3, Basic Auth, and OAuth 1 and 2, and it can easily generate JWT tokens as well. Many developers appreciate the ease with which they can generate usable client code with Paw: you just need to import a cURL command and modify the request, and Paw will help you generate the code for Python, Java, etc.

As we’ve mentioned, it’s quite easy to use—especially with a wide range of smart mouse and keyboard shortcuts. However, while the exclusive support for Mac devices means it is more narrowly focused and succinctly designed, this is also one of Paw’s biggest downsides.

The lack of support for Windows and Linux devices means that a lot of developers who prefer these operating systems will be left out of the Paw experience. Another big letdown is the lack of mock API features, meaning you’ll need another tool from our list for that. Finally, the extremely high per-user price means it’s not the most affordable solution for larger teams.

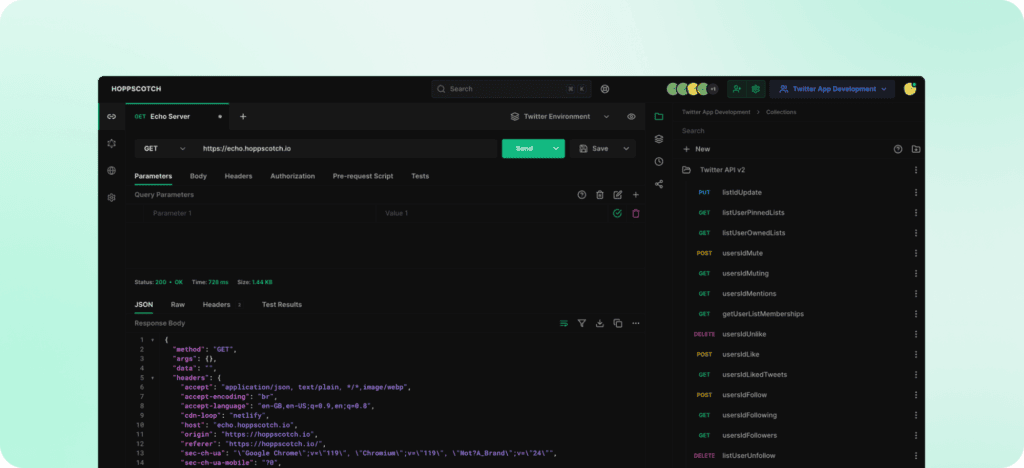

Hoppscotch

Hoppscotch is a lightweight and open-source alternative to Postman. Formerly referred to as Postwoman, Hoppscotch is browser-based, eliminating the need for installation and allowing cross-platform support through any modern web browser.

Hoppscotch supports REST APIs, GraphQL with schema introspection, and WebSocket testing, making it a really versatile option for a wide range of API protocols. It offers great support for environment variables and dynamic requests, making testing across multiple environments quite simple.

The tool itself is minimalist, which makes it quite fast, but its simplicity can be a drawback for some teams that need more advanced feature sets. That being said, it offers pretty great support for collaborative systems, and boasts version tracking, collection organization, multiple authentication methods, and more.

Notably, since it’s an open-source solution, it is highly customizable, which means that its simplicity can be iterated upon for a variety of use cases, specific testing regimes, and demands.

Compared to Postman, Hoppscotch is simpler and lighter, which is ideal for developers who prioritize speed and simplicity over extensive features. While Postman offers advanced team collaboration and more extensive functionality through its paid plans, Hoppscotch is entirely free and community-driven, which can offset some of the headache of adoption. For developers looking for a fast, easy-to-use, and open-source API testing tool, Hoppscotch is an excellent choice.

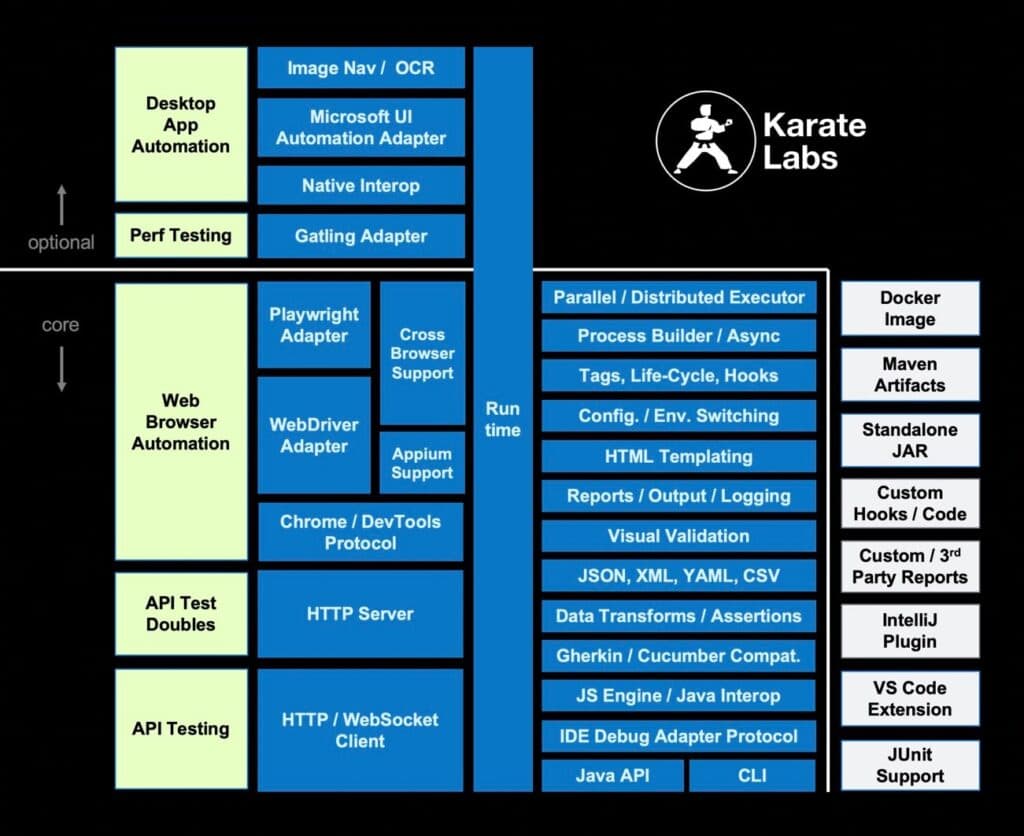

Karate

Karate, from Karate Labs, is an open-source automation platform that integrates a wide range of functions, including API testing, API performance monitoring, mocking, and UI validation, into a single framework. Its language-neutral syntax is designed for simplicity, making it accessible even to non-programmers.

The platform includes built-in assertions and HTML reports, and supports parallel test execution to enhance speed and efficiency. The Karate platform also has some optional components which can be utilized to handle additional complex functions such as desktop application automation, although these are often paired as part of the comprehensive offering.

Karate Labs offers a cross-platform stand-alone executable, eliminating the need for code compilation. Users can write tests in a straightforward, readable syntax tailored for HTTP, JSON, GraphQL, and XML. Additionally, the framework allows for the combination of API and UI test automation within the same test script. For those who prefer programmatic integration, a Java API is available to leverage Karate’s comprehensive automation and data-assertion capabilities.

The platform has seen wide adoption in the market, with over 2 million monthly downloads and usage by more than 550 companies. Karate Labs boasts that its users report significant time savings with tests that execute faster and with easier integration.

Conclusion

By combining the testing types discussed in this blog post, you can create a robust and efficient testing pipeline that can realistically help you identify and resolve issues, enhance performance of your cloud applications, and ensure the security of your APIs.

The challenge now is to figure out how you can best and most effectively implement these methodologies, and choosing the right tool plays a major role in solving this challenge.

Once you’re done implementing these methodologies and your API is continuously being thoroughly tested, you can start looking into how this opens up the possibility of preview environments.

Sign up for a free account with Speedscale. This will give you access to the full suite of functions and tools for 30 days, allowing you to grasp the benefits of using Speedscale for API testing.