Why Autonomous AI Agents Can't Run on SaaS Infrastructure

The SaaS Model Was Never Built for Autonomous Agents

The era of the “copilot” is ending. We are moving rapidly toward the era of the autonomous software factory, where agents don’t just autocomplete our code: they investigate, plan, test, and merge entire features while we sleep.

But this shift has exposed a critical flaw in how we consume AI.

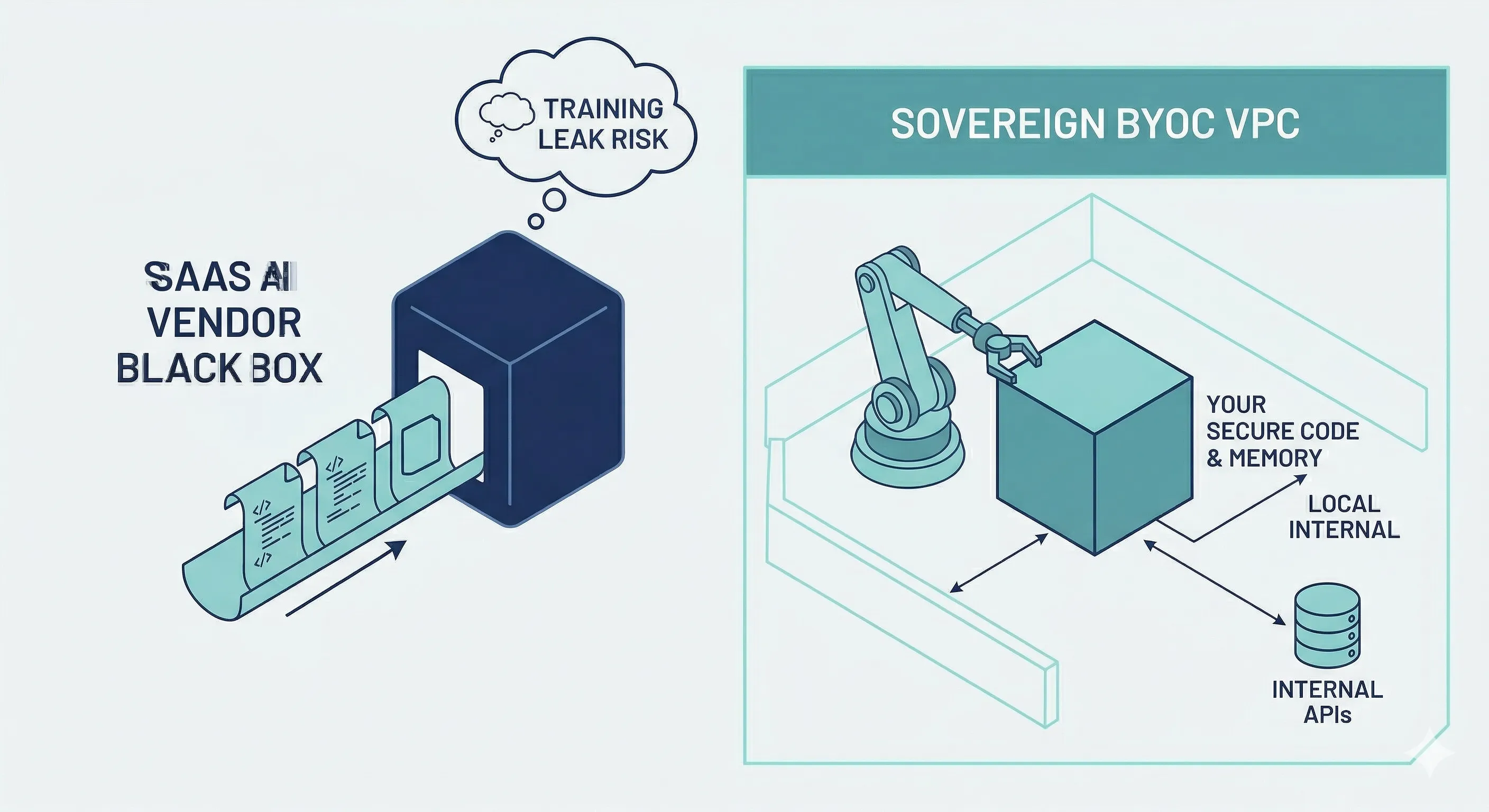

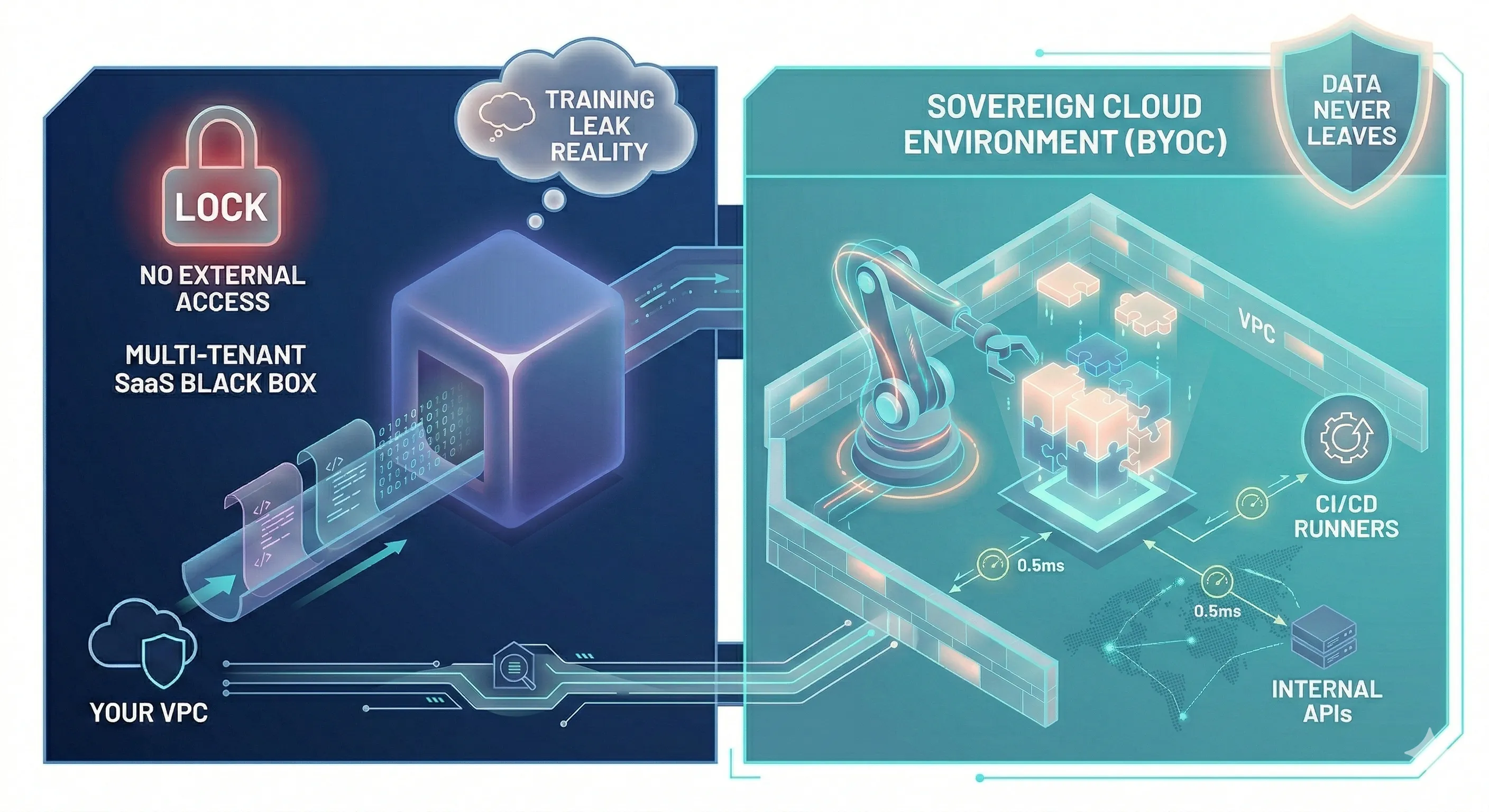

For the past decade, the default motion for enterprise software has been SaaS. It’s easy, frictionless, and managed by someone else. However, when you introduce autonomous agents that need deep, continuous access to your codebase, your private data, and your internal APIs, the SaaS model breaks down. Bring-Your-Own-Cloud (BYOC) is the architectural response.

The enterprise is waking up to the “Training Leak” reality: you cannot pipe your most valuable intellectual property through a multi-tenant SaaS black box and expect to maintain a competitive moat.

To deliver on the promise of autonomous agents, engineering leaders are concluding that they must own the ground where the intelligence lives. The shift to BYOC is already underway.

The Hosting Spectrum: SaaS, On-Prem, and BYOC

When evaluating how to deploy AI infrastructure and agentic workflows, organizations typically look at three models:

| Model | What it means | Advantage | Disadvantage |
|---|---|---|---|
| SaaS | Vendor hosts in their cloud | Zero maintenance, easy to start | Data leaves your VPC; internal systems must be exposed to the internet |
| On-Premise | You run on your own servers or data centers | Air-gapped isolation, ultimate security | Hardware, updates, and scaling require a full IT ops team |
| BYOC | Vendor delivers software; you run it in your AWS/GCP/Azure account | SaaS-like ease with on-prem security; data never leaves your VPC | Requires cloud-native infrastructure (Kubernetes) |

For the autonomous AI software factory, BYOC isn’t just a deployment preference: it’s a strict architectural requirement. Here is why.

Why Autonomous Agents Demand BYOC

1. The Sovereignty Mandate: Protecting the IP Moat

If an autonomous agent is reviewing your proprietary source code, analyzing customer behavior data, and querying internal databases to resolve a Jira ticket, that data is your company’s lifeblood.

In a SaaS AI deployment, you are sending this context across the public internet to a third party. Even with strict enterprise agreements, there is a lingering fear of the “Training Leak”: the risk that your proprietary logic will inadvertently influence the weights of a public foundation model, effectively giving your competitors access to your secrets.

But there is an even bigger, more insidious risk: data gravity. Over time, as your agents build memory, context, and vector databases of your internal operations, letting your AI provider access and manage all that data long-term becomes a massive liability. If you ever want to switch models or providers, you’re locked in. By storing your agent’s memory and operational history in a SaaS black box, you are effectively letting another company hold your institutional knowledge hostage. Data sovereignty is already a hard requirement across regulated industries, and AI agents extend that requirement to every organization that writes software.

Data sovereignty requires that data stays within the geopolitical and network boundaries you control. BYOC allows you to run open-source models, or dedicated, isolated instances of commercial models (through services like Amazon Bedrock or Azure OpenAI), under contractual and network-level controls that keep your data out of external training. Just as importantly, you retain complete ownership of your AI’s long-term context.
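One way to make the network boundary concrete is to audit where the agent’s dependencies actually live. The sketch below is a minimal illustration, not a complete control: the CIDR range, the endpoint names, and the helper functions are all hypothetical, and a real deployment would also rely on security groups, private endpoints, and egress policies.

```python
import ipaddress

# Hypothetical address range for the VPC the agent runs in (assumption).
VPC_CIDRS = [ipaddress.ip_network("10.0.0.0/16")]


def stays_in_vpc(address: str) -> bool:
    """Return True if the IP address falls inside one of the VPC ranges."""
    ip = ipaddress.ip_address(address)
    return any(ip in network for network in VPC_CIDRS)


def audit_endpoints(endpoints: dict[str, str]) -> list[str]:
    """Return the names of endpoints whose address lies outside the VPC."""
    return sorted(
        name for name, address in endpoints.items() if not stays_in_vpc(address)
    )


# Hypothetical endpoints an agent might be configured to call.
agent_endpoints = {
    "vector_db": "10.0.4.12",
    "model_gateway": "10.0.9.3",
    "external_llm_api": "203.0.113.7",  # documentation-range public address
}

print(audit_endpoints(agent_endpoints))
```

Any name this audit returns is a place where agent context would leave the boundary, which is the situation the sovereignty argument is meant to rule out.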

2. The Proximity Paradox: The Inner Loop of Autonomy

When a human uses an AI chat interface, a 2-second response latency is acceptable.

When an autonomous agent like Speedy is running a tight “Inner Loop” (navigating a codebase, executing test suites, querying microservices, and evaluating logs hundreds of times a minute), latency is fatal.

If your agent is hosted in a vendor’s SaaS environment, every API call it makes to your internal systems must traverse the public internet. Shipping 10 MB of context back and forth for every reasoning step introduces massive network overhead.

BYOC solves the Proximity Paradox. By hosting the agent in the same VPC as your databases, your CI/CD runners, and your internal APIs, you cut network round trips from tens of milliseconds over the public internet to the low-millisecond range or better. The agent operates at the speed of your internal network, which makes true autonomy possible.
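The compounding effect can be estimated with back-of-the-envelope arithmetic. The sketch below assumes the agent’s calls run one after another and uses illustrative numbers (300 calls per minute, an 80 ms public round trip, a 1 ms in-VPC round trip); none of these are measurements, and the function name is hypothetical.

```python
def network_seconds_per_minute(calls_per_minute, round_trip_ms,
                               payload_mb=0.0, bandwidth_mbps=1000.0):
    """Seconds per minute spent waiting on the network, assuming sequential calls."""
    # Transfer time in ms: megabits to send divided by megabits per second.
    transfer_ms = payload_mb * 8 / bandwidth_mbps * 1000
    return calls_per_minute * (round_trip_ms + transfer_ms) / 1000


# Illustrative assumptions, not measurements.
CALLS_PER_MINUTE = 300
PUBLIC_RTT_MS = 80.0
IN_VPC_RTT_MS = 1.0

public = network_seconds_per_minute(CALLS_PER_MINUTE, PUBLIC_RTT_MS)
in_vpc = network_seconds_per_minute(CALLS_PER_MINUTE, IN_VPC_RTT_MS)

print(f"public internet: {public:.1f} s of waiting per minute")
print(f"same VPC:        {in_vpc:.1f} s of waiting per minute")
```

Under these assumptions the agent spends 24 seconds of every minute waiting on round trips across the public internet, versus 0.3 seconds inside the VPC. Passing a `payload_mb` value adds transfer time on top, which widens the gap further when large contexts move on every step.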

3. Inference Unit Economics

SaaS providers package compute, software, and margin into a single token price. When you are paying per token for sporadic human-AI interactions, this makes sense.

But agents are token-hungry. They run in continuous loops, generating massive amounts of context to evaluate paths, debug errors, and synthesize results. At scale, the “SaaS Tax” on AI inference becomes astronomical.

BYOC allows enterprises to reclaim their margins. By running the inference and the agent orchestration in your own cloud, you can use reserved compute instances, spot instances, and specialized hardware pricing agreements with your cloud provider. You control the unit economics of your AI factory.
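A rough break-even calculation shows where that control starts to matter. The prices and instance counts below are placeholders chosen for easy arithmetic, not quotes from any provider, and the model is deliberately simplified: it ignores the throughput ceiling of the reserved hardware and the engineering cost of operating it.

```python
HOURS_PER_MONTH = 730  # common approximation for billing estimates


def per_token_monthly_cost(tokens_per_month, price_per_million_tokens):
    """Monthly spend when every token is billed at a metered rate."""
    return tokens_per_month / 1_000_000 * price_per_million_tokens


def reserved_monthly_cost(instances, hourly_rate, hours=HOURS_PER_MONTH):
    """Monthly spend for a fixed pool of inference instances."""
    return instances * hourly_rate * hours


def break_even_tokens(instances, hourly_rate, price_per_million_tokens):
    """Monthly token volume at which the fixed pool costs the same as metered use."""
    fixed = reserved_monthly_cost(instances, hourly_rate)
    return fixed / price_per_million_tokens * 1_000_000


# Hypothetical inputs: 2 instances at $4/hour versus $10 per million tokens.
volume = break_even_tokens(instances=2, hourly_rate=4.0, price_per_million_tokens=10.0)
print(f"break-even at {volume:,.0f} tokens per month")
```

With these placeholder numbers the fixed pool costs $5,840 per month, so metered pricing is cheaper below 584 million tokens per month and more expensive above it. Agents running continuous loops are the workload most likely to cross that line.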

The BYOC Ecosystem: Off-the-Shelf, In-Your-Cloud

The shift to BYOC is creating a new category of vendors that prioritize the “Delivery Agent” model over the traditional SaaS model.

Companies like Replicated are pioneering this space, providing the infrastructure that allows software vendors to securely deliver, manage, and update complex Kubernetes applications inside their customers’ air-gapped or BYOC environments. This enables AI startups to offer the ease of SaaS while respecting the security boundaries of the enterprise.

At the foundation of this stack is open source. Open-source models, orchestration frameworks, and vector databases provide the core building blocks that organizations can compose into their own sovereign AI stacks, completely free from vendor lock-in.

Operationalizing the Sovereign Stack: The Testing Dilemma

Architecting a BYOC AI factory is one challenge; operating it safely is another.

When an autonomous agent is wired directly into your internal services, how do you test it? You cannot let an untested agent loose on your production databases. However, because the agent relies on the complex web of your internal APIs to function, traditional static mocking is insufficient.

This is where the observability and testing strategy must evolve. You need a way to test the agent in a realistic environment without the risk of production impact.

By capturing real production API traffic and using it to generate dynamic, stateful mocks, Speedscale creates a production context that mirrors your live environment. Deploy this within your BYOC sandbox and the agent can interact with highly realistic API responses, validate its reasoning loops, and prove its safety before it ever touches live systems.
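To illustrate the general idea of a stateful mock built from recorded traffic, here is a minimal sketch. It is not Speedscale’s implementation or API: the `ReplayMock` class, the record format, and the fallback behavior are all assumptions made for the example. It replays recorded responses in their original order for each endpoint, so a sequence such as "pending" followed by "shipped" is preserved.

```python
from collections import defaultdict, deque


class ReplayMock:
    """Replays recorded responses per (method, path) in the order they were captured."""

    def __init__(self, recorded):
        self._queues = defaultdict(deque)
        for entry in recorded:
            key = (entry["method"], entry["path"])
            self._queues[key].append(entry["response"])

    def handle(self, method, path):
        queue = self._queues.get((method, path))
        if not queue:
            # Nothing was recorded for this call: fail loudly instead of guessing.
            return {"status": 501, "body": "no recorded response"}
        if len(queue) > 1:
            # Advance the state: the next call sees the next recorded response.
            return queue.popleft()
        # Sequence exhausted: keep returning the final recorded state.
        return queue[0]


# Hypothetical captured traffic for a single order lookup.
recorded_traffic = [
    {"method": "GET", "path": "/orders/42", "response": {"status": 200, "body": "pending"}},
    {"method": "GET", "path": "/orders/42", "response": {"status": 200, "body": "shipped"}},
]

mock = ReplayMock(recorded_traffic)
first = mock.handle("GET", "/orders/42")
second = mock.handle("GET", "/orders/42")
third = mock.handle("GET", "/orders/42")
missing = mock.handle("DELETE", "/orders/42")
```

A static mock would return the same body on every call. Keeping the recorded order lets an agent that polls for a state change see the change happen, and the explicit 501 for unrecorded calls surfaces any action the agent attempts that production traffic never exercised.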

Common Questions About BYOC and AI Agents

What is BYOC for AI agents?

BYOC (Bring Your Own Cloud) means the AI agent software runs inside your cloud account (your AWS, GCP, or Azure VPC) rather than in the vendor’s multi-tenant environment. You control where the data lives and who can access it.

How is BYOC different from traditional on-premise deployment?

On-premise means servers you buy and maintain in your own data center. BYOC uses your existing cloud infrastructure. The vendor handles software updates and delivery; you retain control of the data boundary without the hardware overhead.

Why does latency matter so much for autonomous agents?

A human tolerates a two-second AI response. An autonomous agent running a development loop cannot. Every API call the agent makes to your internal systems adds up. If those calls cross the public internet, latency compounds and kills throughput. Hosting the agent inside your VPC removes the public-internet hop and keeps those calls on your internal network.

The Inevitable Architecture

The transition to AI-driven software development is not just a tool change; it’s a fundamental architectural shift.

While SaaS AI tools are excellent for individual productivity, the enterprise autonomous agent demands the security, latency, and cost control that only BYOC can provide.

For engineering leaders, the strategy is clear: start exploring with SaaS to prove the value, but architect your systems, your observability, and your testing frameworks for the inevitable move to BYOC. The companies that win the next decade will be the ones that own the ground where their intelligence lives.

If you’re building the testing layer for your BYOC AI factory, Speedscale’s production traffic capture gives your agents a realistic API environment to validate against before they ever touch live systems.