Prompt, Deploy, Pray Is Dead: Validating AI Code with Proxymock

Recent outages tied to AI-assisted code changes have pushed companies into a corner. After several incidents with a large “blast radius,” organizations like Amazon introduced stricter controls, mandating that senior engineers manually review all AI-generated code before it reaches production.

That response makes sense on paper, but it exposes a fatal flaw in the modern development pipeline. If every AI-generated change requires an extensive, manual senior-level review, the ROI of using AI to generate code plummets. The time saved writing code is spent understanding and validating it instead.

The AI Review Bottleneck

AI tools can produce large amounts of code quickly, sometimes far more than a human would naturally write in a single change. Senior engineers are then expected to evaluate code they didn’t write and may not fully understand at first glance. They have to consider architectural implications, failure modes, retry logic, cascading failures, and deep edge cases that only appear under real traffic.

The review process is critical for safety, but it introduces massive friction. Relying purely on human eyeballs to catch AI hallucinations doesn’t scale.

Code Archaeology vs. Behavioral Validation

To safely deploy AI-generated code, you must stop asking, “Does this code look correct?” and start proving, “Does this code behave correctly when exposed to real production scenarios?”

The review bottleneck exists because we’re asking humans to do what machines are better at. Software can be validated not just by reading the code, but by running it and checking how it behaves.

You don’t need to turn every AI update into a painstaking exercise in manual code archaeology, deciphering the architecture by hand.

You Need to Use Proxymock

If you are deploying AI-generated code without validating exactly how it will react to real-world conditions, you are going to have an outage. It is just a matter of time.

Relying purely on manual code review will eventually fail. The real questions are:

- How do you prove AI-generated code actually works without turning every change into a manual code archaeology exercise?

- How do you ensure your code handles failure modes?

- How do you limit the “blast radius” of a bad change?

You must validate the behavior of the new code before you deploy it.

Relying on “Prompt, Deploy, Pray” is not a strategy. It’s just gambling with your production stability.

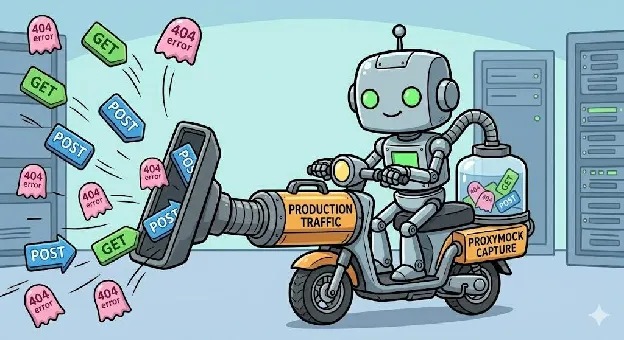

The Solution: Validate Behavior with Proxymock

To safely deploy AI-generated code, you need a different kind of safety net. You need Proxymock.

Proxymock (by Speedscale) shifts the validation away from human interpretation and grounds it in production reality. Proxymock captures your actual production interactions and allows you to replay them locally, in test environments, or in CI pipelines. It automatically records API and database traffic and turns those interactions into realistic mocks and tests.

Your production systems already contain the best test data available: your real user traffic. That traffic represents real API calls, real edge cases, real integration behavior, and real dependency responses.
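
To make the idea of turning recorded traffic into mocks concrete, here is a minimal Python sketch. It is not Proxymock's API or recording format: `RecordedMock`, `get_display_name`, and the shape of the `recordings` list are hypothetical names invented for illustration. The point is only that a dependency's recorded responses can stand in for the live dependency while the code under test runs.

```python
class RecordedMock:
    """Serves previously recorded responses for a downstream dependency.

    Hypothetical illustration only; the recording shape is an assumption.
    """

    def __init__(self, recordings):
        # Index recorded responses by (method, path) for lookup.
        self._responses = {
            (r["method"], r["path"]): (r["status"], r["body"]) for r in recordings
        }
        self.unmatched = []  # requests the code made that were never recorded

    def request(self, method, path):
        key = (method, path)
        if key not in self._responses:
            self.unmatched.append(key)
            return 502, {"error": "no recording for request"}
        return self._responses[key]


def get_display_name(client, user_id):
    """Stand-in for code under test that calls a user service."""
    status, body = client.request("GET", f"/users/{user_id}")
    if status != 200:
        return "unknown user"
    return body["name"]


# Responses as they might have been captured from the real dependency.
recordings = [
    {"method": "GET", "path": "/users/42", "status": 200, "body": {"name": "Ada"}},
    {"method": "GET", "path": "/users/99", "status": 404, "body": {"error": "not found"}},
]

mock = RecordedMock(recordings)
```

Tracking `unmatched` requests is a deliberate choice in this sketch: if new code starts calling a dependency in a way production never did, that is itself a behavioral change worth surfacing.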

Production Traffic as the Ground Truth

Validation with Proxymock is straightforward:

- Same Inputs: Replay exact production traffic against the new AI-generated code.

- Expected Outputs: Verify the service returns the results production returned for the same requests.

- No Regressions: Catch failures before they reach your users.
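
The three checks above can be sketched in a few lines of Python. This is a minimal illustration of the replay-and-compare idea under stated assumptions, not Proxymock's actual API or file format: `RecordedCall`, `replay`, and `handle_v2` (a stand-in for the AI-generated implementation) are hypothetical names, and the recorded calls are made-up sample data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RecordedCall:
    """One captured request plus the response production returned (assumed shape)."""
    method: str
    path: str
    body: dict
    expected_status: int
    expected_body: dict


def replay(calls, handler):
    """Send each recorded request to handler and collect any mismatches."""
    regressions = []
    for call in calls:
        status, body = handler(call.method, call.path, call.body)
        if (status, body) != (call.expected_status, call.expected_body):
            regressions.append(
                f"{call.method} {call.path}: expected "
                f"{call.expected_status} {call.expected_body}, got {status} {body}"
            )
    return regressions


def handle_v2(method, path, body):
    """Stand-in for the new implementation under test."""
    if method == "POST" and path == "/orders":
        qty = body.get("qty", 0)
        if qty <= 0:
            return 400, {"error": "qty must be positive"}
        return 201, {"qty": qty, "total_cents": qty * 250}
    return 404, {"error": "not found"}


# Same inputs and expected outputs, as they might have been recorded.
recorded = [
    RecordedCall("POST", "/orders", {"qty": 2}, 201, {"qty": 2, "total_cents": 500}),
    RecordedCall("POST", "/orders", {"qty": 0}, 400, {"error": "qty must be positive"}),
]

if __name__ == "__main__":
    failures = replay(recorded, handle_v2)
    for failure in failures:
        print(failure)
    print("no regressions" if not failures else f"{len(failures)} regression(s)")
```

A strict equality check like this is the simplest possible comparison. Real responses often contain timestamps or generated IDs, so a practical comparison would need to ignore or normalize those fields.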

Confidence That Your Code Actually Works

AI coding dramatically increases code velocity, but velocity without automated validation just means you ship risk faster.

The lesson from recent outages is that faster code generation requires faster validation. Manual review alone doesn’t scale well. Behavioral validation does. Proxymock provides a confidence level that code review alone can’t guarantee: the confidence that your code will work under real conditions. See how AI code verification works at the platform level.

Stop spending hours trying to understand large blocks of AI code. Replay real production interactions and confirm that new implementations behave correctly. Use Proxymock from Speedscale to automate validation.

Start validating with Proxymock today: Proxymock Overview