AI Coding Agents Have a UX Problem Nobody Wants to Talk About

The pitch was simple: let AI write your code so you can focus on the hard problems. Three years into the AI coding revolution, developers are focused on hard problems all right, just not the ones anyone expected. Instead of designing systems and solving business logic, engineers in 2026 spend a startling amount of their day managing the AI itself.

Should you use Fast Mode or Deep Thinking? Haiku or Opus? Cursor or Claude Code or Windsurf? Should you write a SKILL.md file or a custom system prompt? Is your context window too bloated? Did you pick the right MCP server configuration? The industry has a name for this: the thrash.

The Thrash Is Real and It’s Measurable

The numbers paint a clear picture of how fast the industry is moving. In Cursor alone, the ratio of agent users to traditional autocomplete users inverted in under a year, with agent usage growing over 15x. By March 2026, twice as many developers relied on autonomous agents as on standard autocomplete. The old model of line-by-line suggestions is dead.

But this velocity has a cost. AI-augmented teams are shipping an estimated 126% more projects, but technical debt has risen by 30–41% alongside that output, with roughly a 30% increase in change failure rates. Developers are moving fast and breaking things. Increasingly, the things breaking are their own workflows.

The root cause isn’t that the tools are bad. It’s that they demand constant, expert-level decision-making about things that have nothing to do with writing software.

You Now Manage Compute, Not Code

The introduction of variable compute modes across every major platform might be the single biggest UX failure in the current toolchain.

Remember the turbo button on old PCs? One button, two speeds, zero ambiguity. Modern AI tools took that concept and turned it into a graduate-level decision matrix. Every tool now ships with some variant of these tiers:

| Mode | What It Does | The Catch |
|---|---|---|
| Fast Mode | Low-latency, cheap execution for quick edits | High error rate, file corruption, context stripping |
| Deep Thinking | Extended chain-of-thought reasoning for complex tasks | Expensive, slow, unpredictable billing |
| Auto Mode | Black-box router that picks for you | Inconsistent quality, surprise costs |

On paper, this makes sense. Why burn expensive reasoning tokens on a simple rename? In practice, it means every single interaction with your AI coding tool now requires a meta-decision: how hard should the AI think about this?
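To make that meta-decision concrete, here is a minimal sketch of the kind of rule an Auto Mode router might apply. The `Mode`, `Task`, and `route` names and the keyword list are hypothetical. Real routers are proprietary and far more complex. The point is that even a crude rule like this encodes a judgment that someone has to own.

```python
from dataclasses import dataclass
from enum import Enum


class Mode(Enum):
    FAST = "fast"
    DEEP = "deep"


@dataclass
class Task:
    description: str
    files_touched: int


# Hypothetical keywords that suggest a task needs extended reasoning.
DEEP_KEYWORDS = ("architecture", "refactor", "migration", "concurrency", "security")


def route(task: Task) -> Mode:
    """Pick a compute mode with a simple, deterministic heuristic.

    Multi-file work, or work whose description mentions a 'deep' keyword,
    goes to Deep Thinking; everything else stays on Fast Mode.
    """
    text = task.description.lower()
    if task.files_touched > 1 or any(k in text for k in DEEP_KEYWORDS):
        return Mode.DEEP
    return Mode.FAST


if __name__ == "__main__":
    print(route(Task("Rename userId to accountId", files_touched=1)))  # Mode.FAST
    print(route(Task("Refactor the billing module", files_touched=4)))  # Mode.DEEP
```

A rule like this is predictable but brittle. A one-file change to authentication logic stays on Fast Mode unless its description happens to contain a keyword, which is exactly the kind of misrouting developers complain about.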

This is a fundamental UX failure. Developers shouldn’t need to be inference cost analysts, yet here we are. Engineers on Reddit report that Fast Mode “corrupts the files and forces me to revert via Git repeatedly just to make one simple change.” Others have discovered that Auto Mode routed a critical architectural question to the cheap model, producing subtly broken code that passed CI but failed in production.

The cognitive overhead isn’t theoretical. Each mode switch is a micro-decision that fragments attention and pulls developers out of flow state, the exact state these tools were supposed to protect.

The Model Picker Problem

Layered on top of compute modes is the model selection problem. In 2026, a single IDE might offer you Claude Opus 4.6, Claude Sonnet 4.6, Claude Haiku 4.5, GPT-5.2 Codex, GPT-5.3 Codex, Gemini 3 Flash, and Gemini 3 Pro, sometimes simultaneously.

Each model has different strengths, different pricing, different context windows, and different failure modes. Picking the right one is itself a skill that takes weeks to develop and changes every time a vendor ships an update. One developer described the experience as “driving with three different GPS apps arguing about which exit to take.”

This isn’t a power-user complaint. It’s a fundamental design problem. The tools are pushing infrastructure-level decisions onto people whose job is to build products. A plumber doesn’t choose which water pressure profile to use for each pipe fitting. A developer shouldn’t have to choose which reasoning depth to apply to each prompt.

Wrappers All the Way Down

The platform fragmentation makes it worse. Today’s developer might interact with AI through:

- Cursor: a VS Code fork with cloud agents, Bugbot Autofix, and a custom Tab model

- Claude Code: a CLI-based agent with SKILL.md hot-reloading and context forking

- Google Antigravity: a bifurcated IDE with a separate “Manager Surface” for agent orchestration

- Windsurf: optimized for deep context retrieval in legacy codebases

- GitHub Copilot: Agent Mode with terminal execution, backed by a choice of models including Claude

- Terminal agents like opencode and aider, often extended with custom MCP configurations

Each platform has its own configuration format, its own context management strategy, its own billing model, and its own opinions about how agents should work. Skills written for one tool may or may not port to another, and MCP servers need a separate configuration for each client. The promise of interoperability exists on paper: the Model Context Protocol (MCP) and the Agent2Agent (A2A) protocol are real standards. In practice, though, developers still spend hours configuring plumbing instead of writing code.

The result is what Google itself identified as the primary friction point: long-form prompting disrupts flow state. Its Gemini Code Assist 2.73.0 update tried to solve this with features like “Finish Changes” that infer intent from partial code. But this treats the symptom, not the disease. The disease is that every tool requires the developer to be an expert operator of that specific tool. The developer experience suffers when the tool itself becomes the bottleneck.

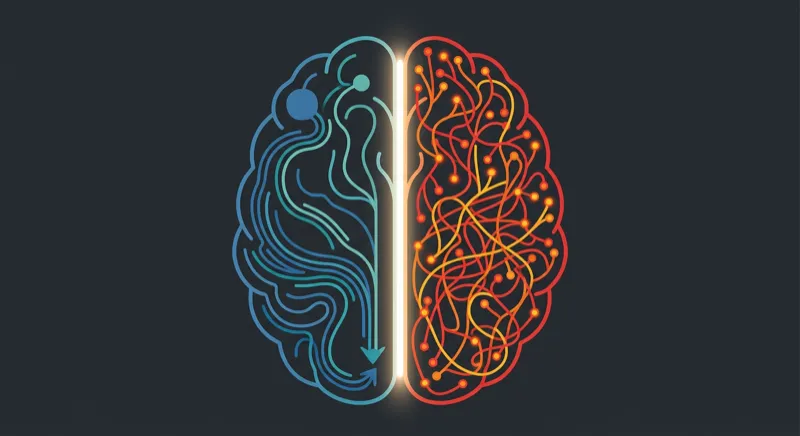

The Neuroscience of Why This Matters

This isn’t just inconvenient. It’s biologically destructive to the kind of thinking that produces good software.

Neuroscience research by Wiehler et al. shows that sustained, cognitively demanding work causes glutamate to accumulate in the lateral prefrontal cortex. As it builds up, exercising executive control becomes metabolically more expensive, and people drift toward low-effort choices. Reviewing AI-generated multi-file diffs and deciding which model and mode to use for every task are exactly this kind of sustained, high-demand work. This isn’t a willpower problem. It’s neurochemistry.

Layer on “attention residue,” the cognitive overhead that persists after switching away from a task, and the picture gets worse. In a study of interrupted programming tasks, Parnin and Rugaber found that only 10% of resumed sessions got back to editing code in under a minute. In 93% of sessions, developers first had to navigate through the code just to rebuild their mental model. Now multiply those context switches by the number of parallel agents a developer is supervising: one handling authentication, another on billing, a third on infrastructure.

The tools promise 10x productivity. The neuroscience says the constant decision-making and context-switching they demand actively degrades the deep thinking required for good architecture.

What “Set It and Forget It” Would Actually Look Like

The organizations navigating the thrash successfully aren’t giving their developers more choices. They’re giving them fewer. Uber built uReview, a proprietary LLM code review system, specifically because off-the-shelf tools generated too many false positives and couldn’t integrate with their internal platforms. uReview analyzes over 90% of Uber’s 65,000 weekly code diffs with a 75% usefulness rating, and it does it without asking individual developers to pick a model or configure a mode.

The pattern emerging from mature organizations follows a clear principle: the developer shouldn’t have to think about the AI.

What does this look like concretely?

- Opinionated defaults over infinite configuration. Pick one model, one mode strategy, one tool. Encode the decision in a SKILL.md or platform config and stop revisiting it weekly.

- Automated compute routing with guardrails. If you must use Auto Mode, pair it with hard budget caps and real-time telemetry. Don’t let a runaway deep-thinking query drain the monthly budget.

- Agentic review as the first pass. Stop asking humans to review raw AI output. Use a second AI agent, configured once by the platform team, to catch logic flaws, style violations, and security issues before a human ever sees the PR.

- Platform teams own the AI stack. Just as platform engineering absorbed Kubernetes complexity so application developers didn’t have to, Internal Developer Platforms should absorb AI tool complexity. Analysts have predicted that by 2026, 80% of software engineering organizations would have dedicated platform teams, and those teams are the natural owners of this work.

- Spec-Driven Development over vibe coding. Define what you want built in a specification before the agent touches code. This eliminates the biggest source of wasted tokens and architectural drift: the AI guessing your intent.
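The guardrail bullet above is easy to state and easy to skip. The following is a minimal sketch of enforcing a hard cap before a request is dispatched. It assumes flat per-request cost estimates are known up front, but real billing is usually per token and only known after the call. `BudgetGuard`, `authorize`, `BudgetExceeded`, and the prices are hypothetical and do not reflect any vendor’s API.

```python
import logging
from dataclasses import dataclass, field

log = logging.getLogger("budget_guard")


class BudgetExceeded(RuntimeError):
    """Raised when no mode fits under the remaining budget."""


@dataclass
class BudgetGuard:
    monthly_cap_usd: float
    # Hypothetical flat per-request estimates; real billing is usually per token.
    price_usd: dict = field(default_factory=lambda: {"fast": 0.01, "deep": 0.50})
    spent_usd: float = 0.0

    def authorize(self, requested_mode: str) -> str:
        """Return the mode to run, enforcing a hard spending cap.

        Try the requested mode first; if it would break the cap, fall back
        to the cheap "fast" mode; if even that would break it, refuse.
        """
        for mode in (requested_mode, "fast"):
            cost = self.price_usd[mode]
            if self.spent_usd + cost <= self.monthly_cap_usd:
                self.spent_usd += cost
                # Emit telemetry on every decision so overspend is visible early.
                log.info("mode=%s cost=%.2f spent=%.2f cap=%.2f",
                         mode, cost, self.spent_usd, self.monthly_cap_usd)
                return mode
        raise BudgetExceeded(
            f"cap ${self.monthly_cap_usd:.2f} reached (spent ${self.spent_usd:.2f})"
        )


if __name__ == "__main__":
    logging.basicConfig(level=logging.INFO)
    guard = BudgetGuard(monthly_cap_usd=0.60)
    print(guard.authorize("deep"))  # deep (spent 0.50)
    print(guard.authorize("deep"))  # fast (deep would exceed the cap)
```

Downgrading to the cheap mode instead of failing outright keeps developers unblocked. A platform team might prefer to fail loudly so that overspend is visible. Either way, the decision is made once, in code the platform team owns, rather than on every prompt.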

The Real Competitive Advantage Is Simplicity

The NIST AI Agent Standards Initiative, launched in February 2026, signals that the industry recognizes the problem. Standards for agent identity, authorization, and interoperability will eventually reduce the configuration surface area. MCP and A2A protocols will mature. Models will get better at self-routing.

But “eventually” doesn’t help the engineering leader who needs to ship product this quarter while their team burns cycles evaluating whether to switch from Cursor to Antigravity.

The competitive advantage in 2026 doesn’t belong to the team using the most sophisticated AI tooling. It belongs to the team that picked a stack, configured it once, and got back to building software. The thrash is real, but it’s not inevitable. The organizations that treat AI tooling like infrastructure, something a platform team manages so developers don’t have to, are the ones that will actually capture the productivity gains everyone was promised.

The best AI coding experience is one you don’t have to think about. We’re not there yet. But the teams that design their workflows around that principle today will be the ones still standing when the dust settles.

If you’re looking for tools that embrace this philosophy, Speedscale’s proxymock takes a set-it-and-forget-it approach to API mocking and testing. One configuration, real production traffic, no model picker required.